White Paper

Mastering the Last Mile: Cross-Platform Comparison of LLM Post-Training Optimization

Discover practical, data-backed techniques to fine-tune your models, save on resources, and deliver better AI-driven results in production.

Key Insights

Dive deep into LLM post-training optimization with proven techniques such as Supervised Fine-Tuning (SFT), reinforcement learning from human feedback (RLHF) with PPO or GRPO, reinforcement learning from AI feedback (RLAIF), and more.

Discover which platforms (Databricks, AWS SageMaker, and Google Colab/Kaggle with Unsloth) deliver the best performance for your fine-tuned LLMs, based on real-world experiments and trade-offs.

Learn how to select the right optimization method for your model, dataset, and compute budget.

Learn how to achieve optimal LLM performance while controlling costs, ensuring efficiency and scalability in production environments.

Apply actionable findings from practical experiments to fine-tune models effectively for production use.

Reduce time to value with strategies that accelerate LLM deployment and deliver tangible business outcomes.


Who should read this white paper

As organizations increasingly deploy AI models at scale, fine-tuning them to meet specific business and operational needs is more critical than ever. In 2025, AI is expected not only to perform accurately but also to deliver measurable business outcomes, from enhancing customer experience to optimizing workflows and enabling automation. The real challenge lies in adapting these models effectively for production use, and post-training optimization is key to achieving this. In this white paper, you will explore the latest techniques, platforms, and strategies for fine-tuning LLMs to drive real-world results.

AI Engineers and Technical Leads

Understand the technical details of post-training optimization and the strategies that will maximize model performance while minimizing execution risk.

Machine Learning Architects

Learn how to optimize your LLMs with a focus on the trade-offs between platform selection, cost, and scaling for production.

Data Science Teams

Gain a clear understanding of advanced model fine-tuning techniques and their impact on end-to-end model performance.

Contents

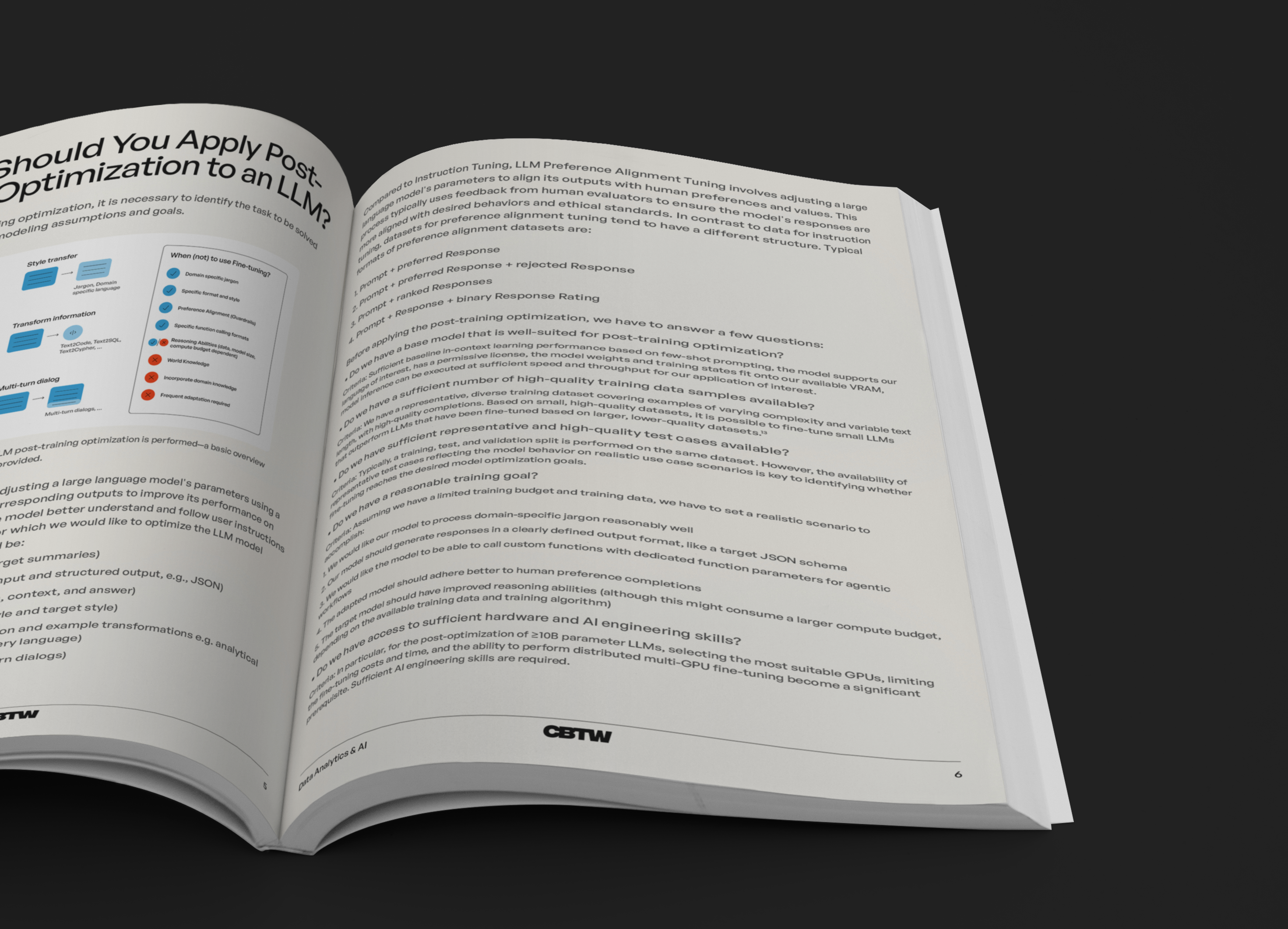

- When Should You Apply Post-Training Optimization to an LLM?

- Key Techniques for Post-Training Optimization

- Selecting the Right Model for Your Use Case

- LLMs in RAG Systems: What Can Be Optimized?

- Generating Synthetic Training Data for Optimization

- Cross-Platform Comparison of Optimization Tools

- Conclusion and Takeaways

- References